
I am currently a final-year Ph.D. student in the School of Software, Tsinghua University, advised by Prof. Yu-Shen Liu. I received my bachelor's degree from the School of Software, Tsinghua University, in 2021. My research interests lie in computer vision and graphics, including multi-view 3D reconstruction and rendering, and multimodal-conditioned generation of 2D videos and 3D representations.


(* Equal Contribution)


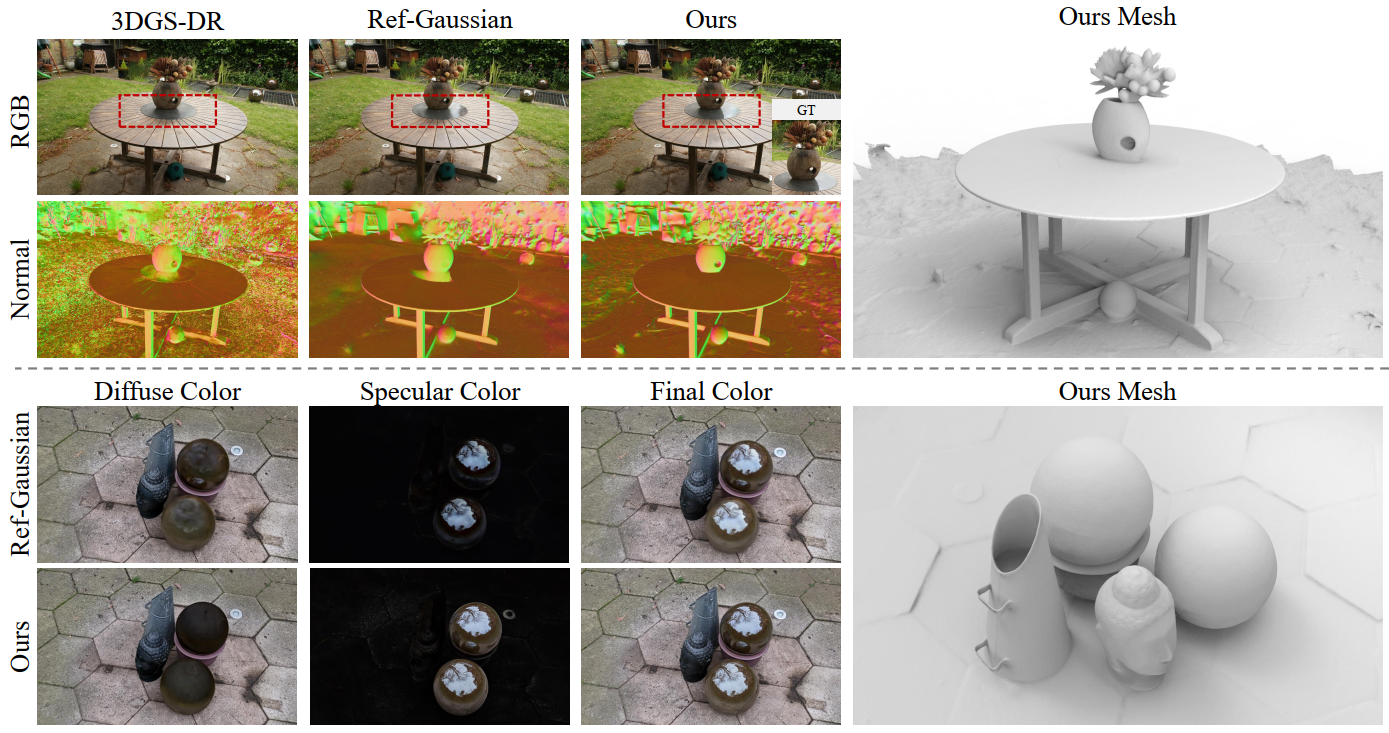

Wenyuan Zhang*, Jimin Tang*, Weiqi Zhang, Yi Fang, Yu-Shen Liu, Zhizhong Han.
Advances in Neural Information Processing Systems (NeurIPS), 2025.
project page | arXiv | code

We introduce MaterialRefGS, which significantly improves the rendering and reconstruction of reflective scenes in Gaussian Splatting by introducing PBR-based multi-view material consistency constraints and a reflection strength prior.


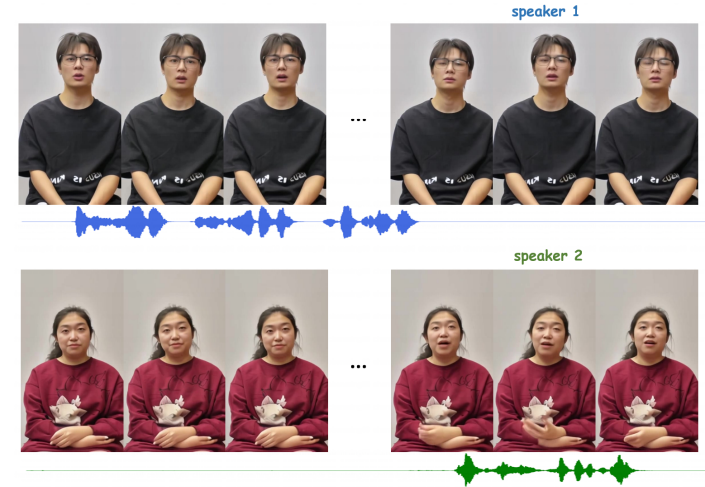

Yikang Ding*, Jiwen Liu*, Wenyuan Zhang, Zekun Wang, Wentao Hu, Liyuan Cui, Mingming Lao, Yingchao Shao, Hui Liu, Xiaohan Li, Ming Chen, Xiaoqiang Liu, Yu-Shen Liu, Pengfei Wan.
Technical Report, arXiv:2509.09595, 2025.
project page | arXiv

We introduce Kling-Avatar, a novel framework that unifies multimodal instruction understanding with cascaded long-duration video generation for avatar animation synthesis.


Ming Chen*, Liyuan Cui*, Wenyuan Zhang*, Haoxian Zhang, Yan Zhou, Xiaohan Li, Songlin Tang, Jiwen Liu, Borui Liao, Hejia Chen, Xiaoqiang Liu, Pengfei Wan.
Technical Report, arXiv:2508.19320, 2025.
project page | arXiv

We introduce an autoregressive human video generation framework that enables interactive multimodal control and low-latency extrapolation in a streaming manner.


Weiqi Zhang*, Junsheng Zhou*, Haotian Geng*, Wenyuan Zhang, Yu-Shen Liu.
Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), 2025.
project page | arXiv | code

We present GAP, which gaussianizes raw point clouds into high-fidelity 3D Gaussians with text guidance via depth-aware diffusion and surface-anchored optimization.


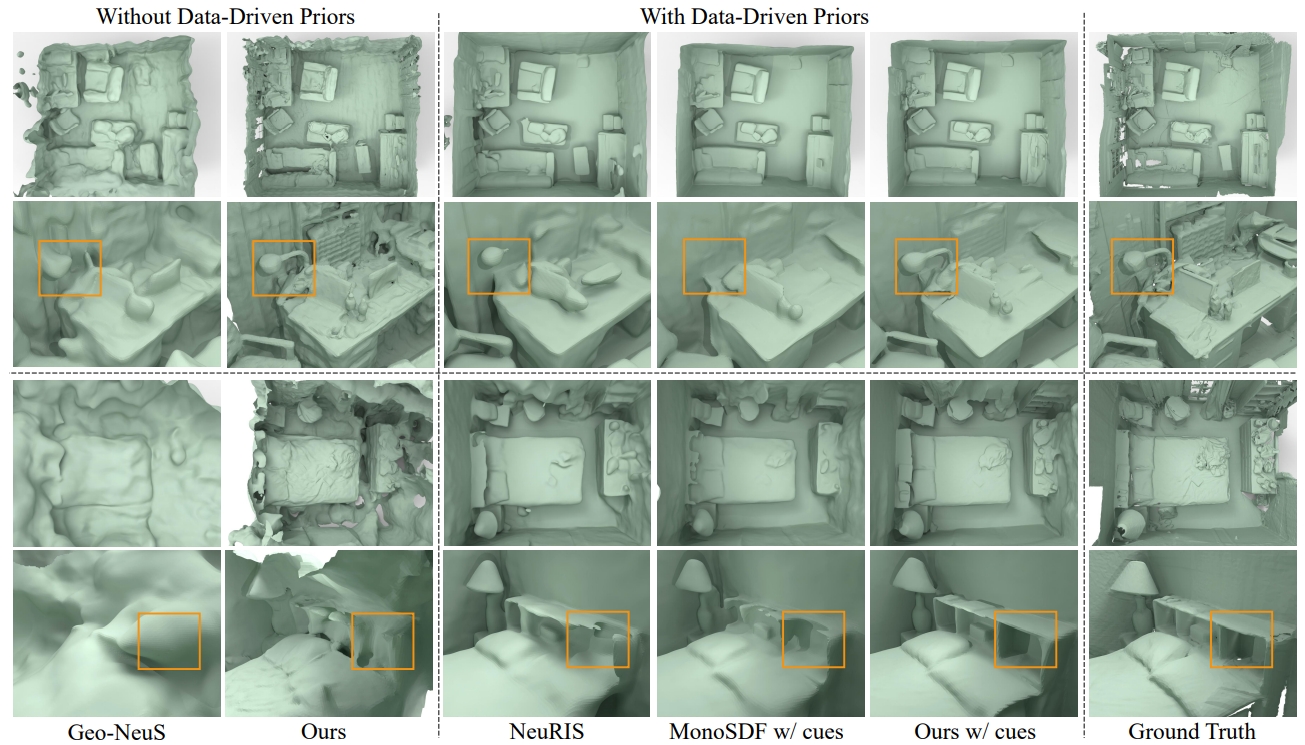

Wenyuan Zhang, Emily Yue-ting Jia, Junsheng Zhou, Baorui Ma, Kanle Shi, Yu-Shen Liu, Zhizhong Han.
Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 2025 (Highlight).
project page | arXiv | code

We present NeRFPrior, which adopts a neural radiance field as a prior to learn signed distance fields using volume rendering for indoor scene surface reconstruction.


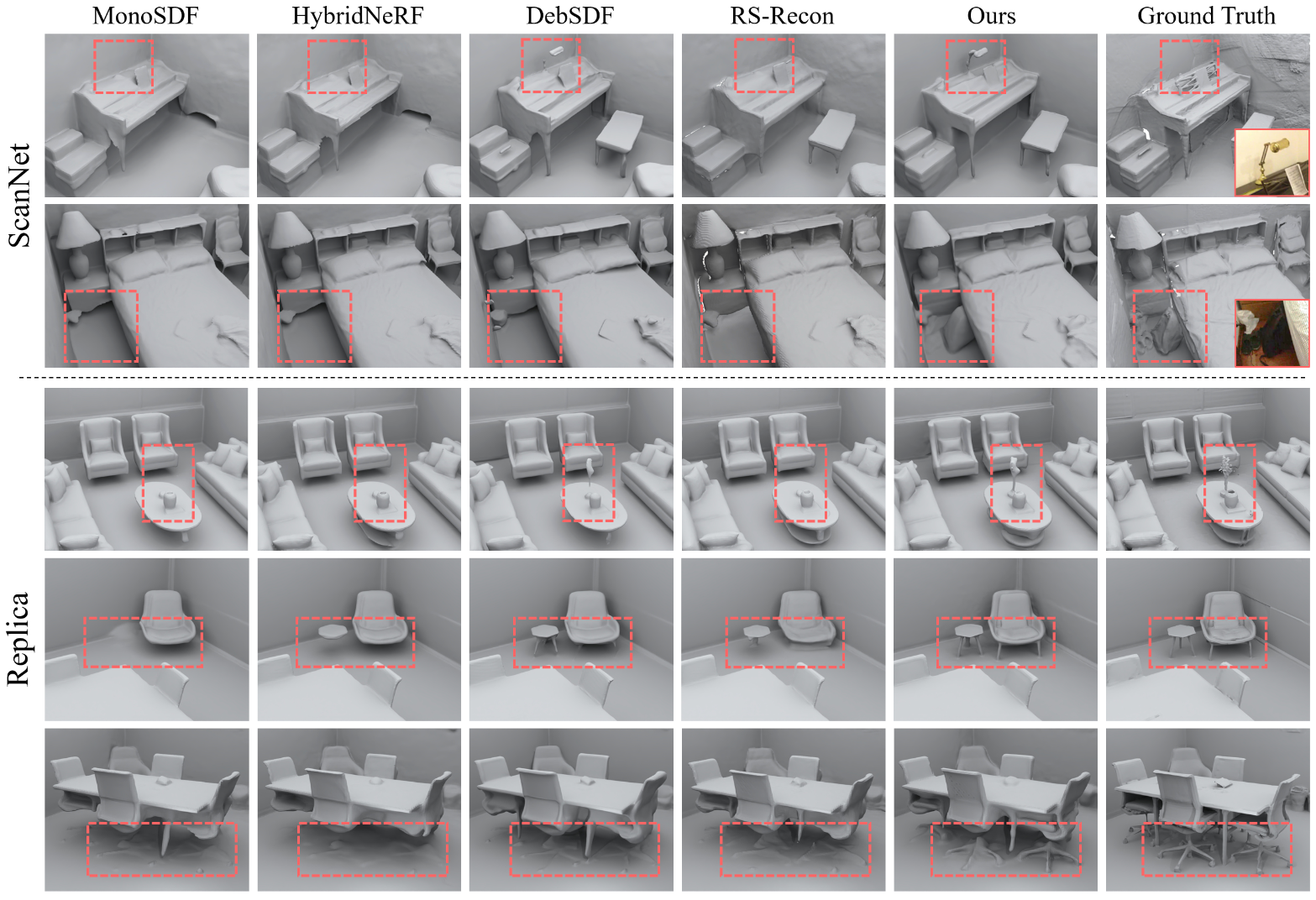

Wenyuan Zhang, Yixiao Yang, Han Huang, Liang Han, Kanle Shi, Yu-Shen Liu, Zhizhong Han.
Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 2025.
project page | arXiv | code

We propose a general approach that explores the uncertainty of monocular depth estimates to provide enhanced geometric priors for neural rendering and reconstruction.


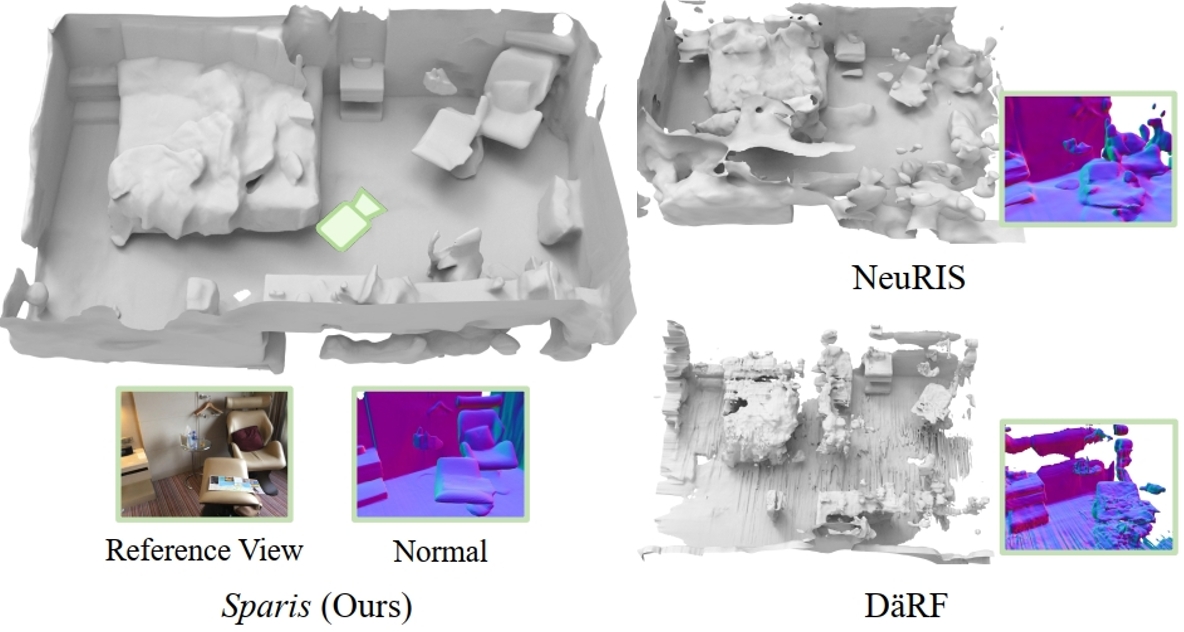

Yulun Wu*, Han Huang*, Wenyuan Zhang, Chao Deng, Ge Gao, Ming Gu, Yu-Shen Liu.
AAAI Conference on Artificial Intelligence (AAAI), 2025 (Oral).
project page | arXiv | code

We propose a method that introduces a novel prior based on inter-image matching information for indoor surface reconstruction from sparse views.


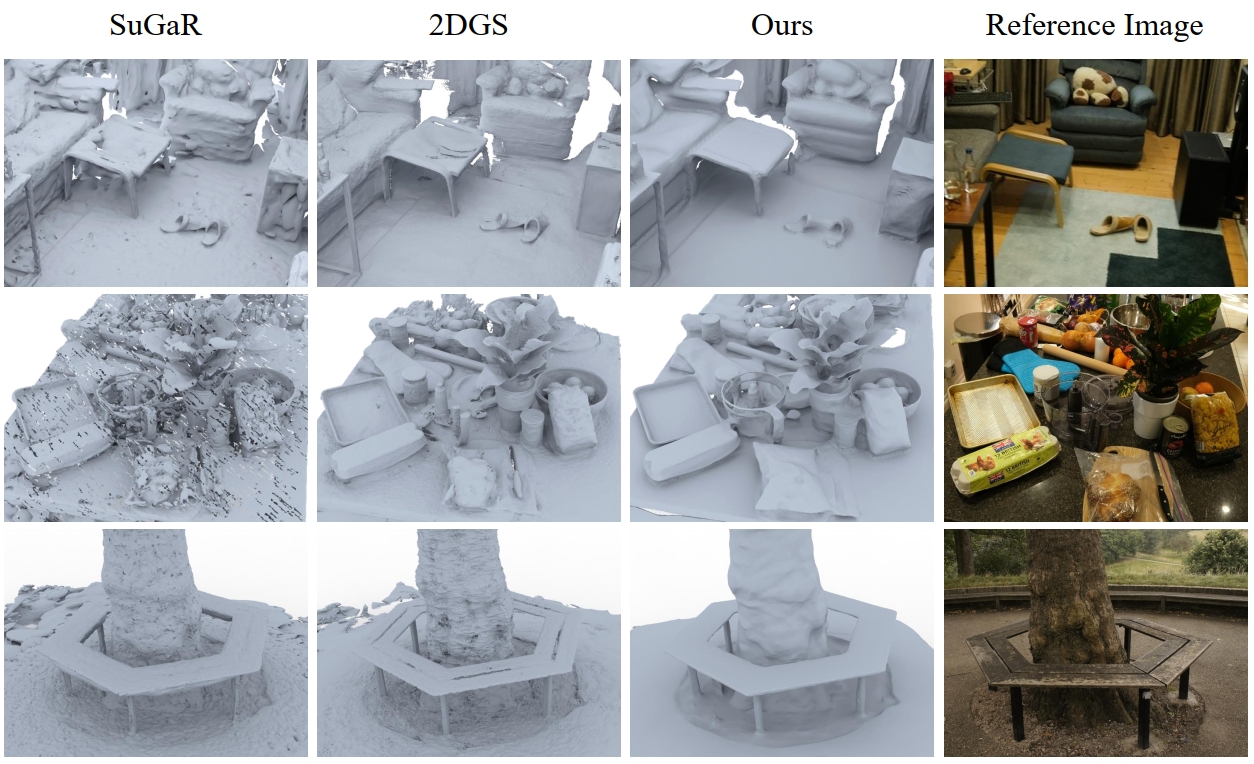

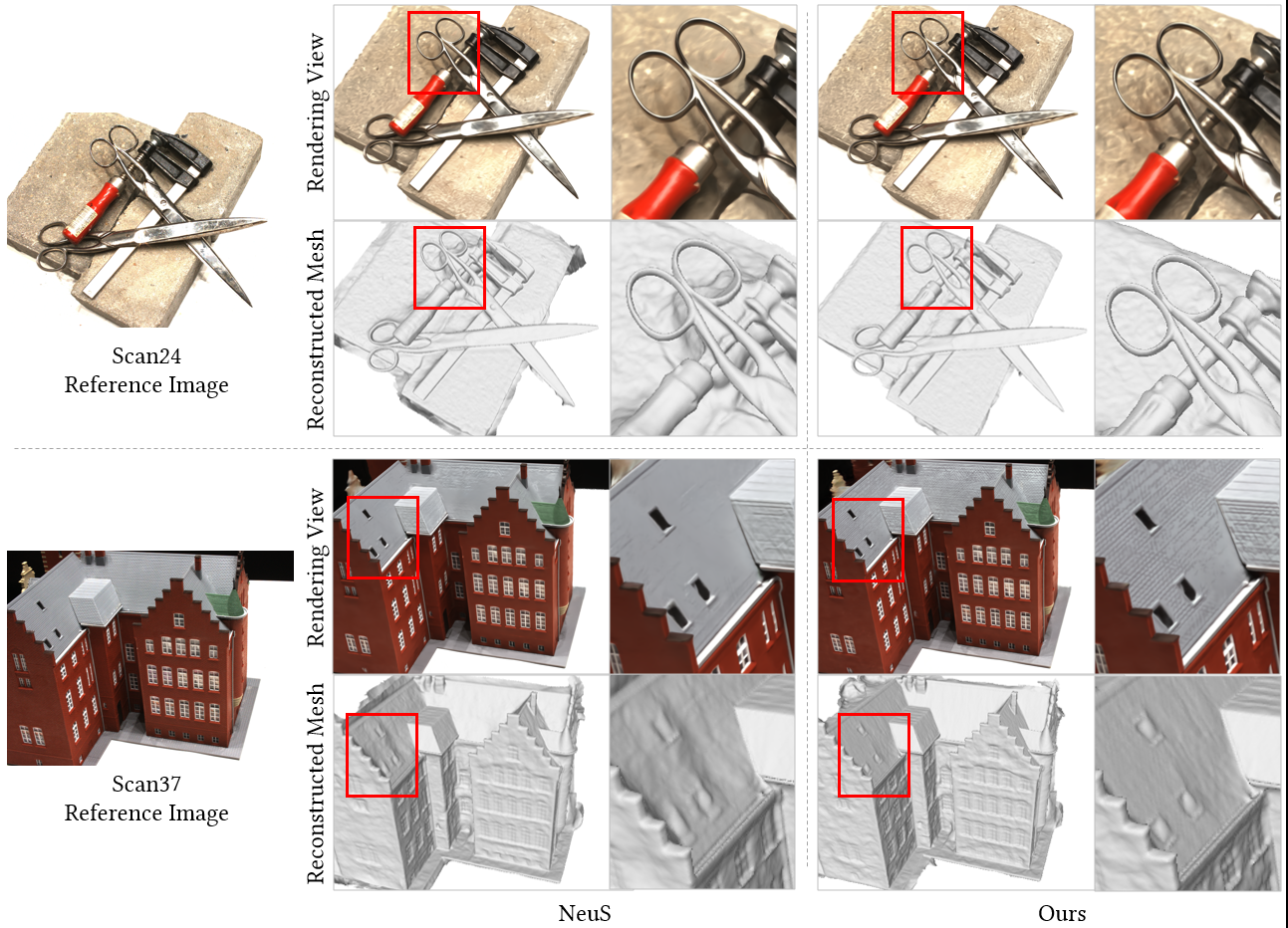

Wenyuan Zhang, Yu-Shen Liu, Zhizhong Han.
Advances in Neural Information Processing Systems (NeurIPS), 2024.
project page | arXiv | code

We propose a method that seamlessly merges 3D Gaussian Splatting (3DGS) with the learning of neural SDFs. To this end, we dynamically align 3D Gaussians on the zero-level set of the neural SDF. Meanwhile, we update the neural SDF by pulling neighboring space onto the pulled 3D Gaussians, which progressively refines the signed distance field near the surface.


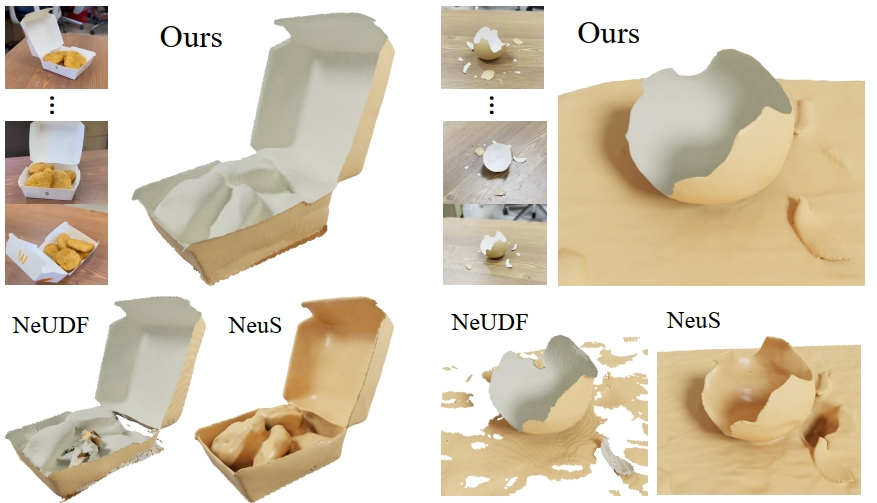

Wenyuan Zhang, Kanle Shi, Yu-Shen Liu, Zhizhong Han.
European Conference on Computer Vision (ECCV), 2024.
project page | arXiv | code

We present a novel differentiable renderer to infer unsigned distance functions (UDFs) from multi-view images. Instead of relying on hand-crafted equations, our differentiable renderer is a neural network pre-trained in a data-driven manner, dubbed volume rendering priors.


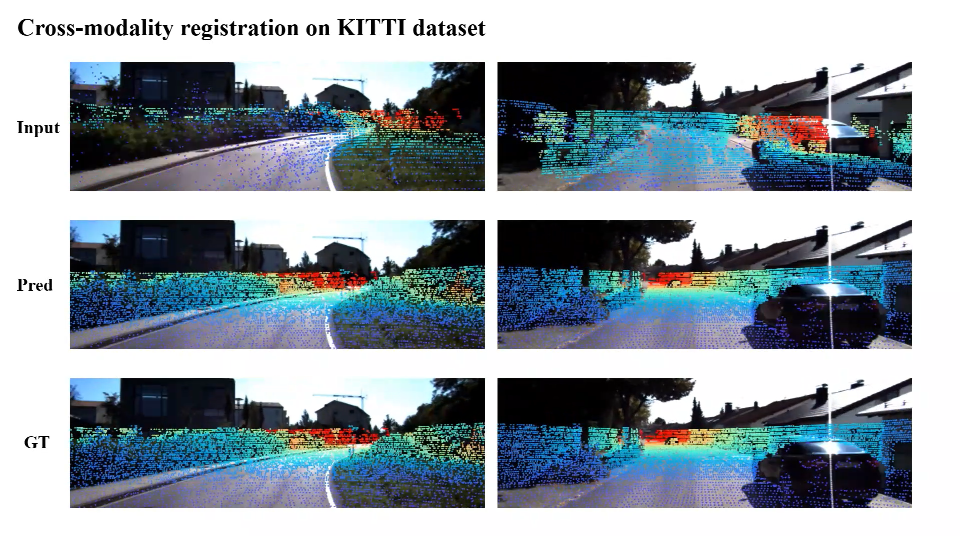

Junsheng Zhou*, Baorui Ma*, Wenyuan Zhang, Yi Fang, Yu-Shen Liu, Zhizhong Han.
Advances in Neural Information Processing Systems (NeurIPS), 2023 (Spotlight).
arXiv | code

We design a triplet network to learn VoxelPoint-to-Pixel matching via a differentiable probabilistic PnP solver.


Wenyuan Zhang, Ruofan Xing, Yunfan Zeng, Yu-Shen Liu, Kanle Shi, Zhizhong Han.
IEEE Transactions on Image Processing (TIP), 2023.
project page | arXiv | code

We introduce a general strategy to speed up the learning procedure for almost all radiance-field-based methods. The key idea is to reduce redundancy by shooting far fewer rays in the multi-view volume rendering procedure.


Template adapted from this awesome website.
